Help and Support > Table of Contents > Using the data profiler

Using the data profiler

The data profiler allows you to quickly access the data quality and contents of a data set anywhere on the datamartist canvas. To use the data profiler:

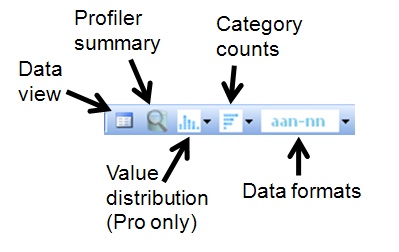

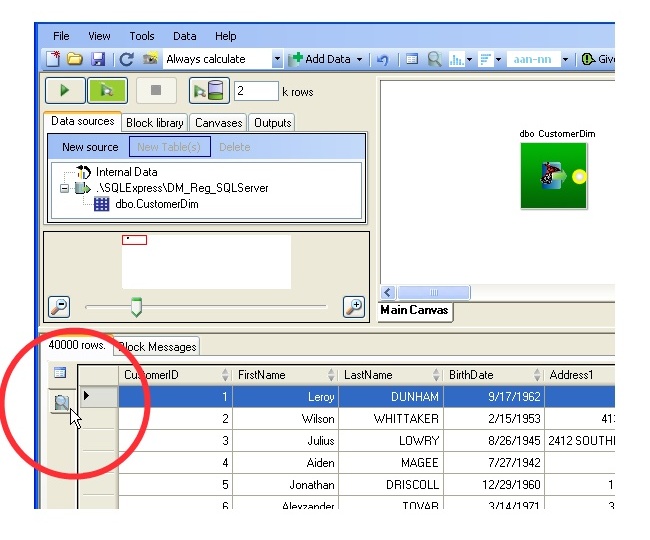

First, select a stub by clicking on either the stub circle on the block or on a connector line between blocks.

Then either Select one of the data profiling toolbar buttons above the data canvas:

OR Select the data profiler tab at the lower left of the screen, directly underneith the data viewer tab;

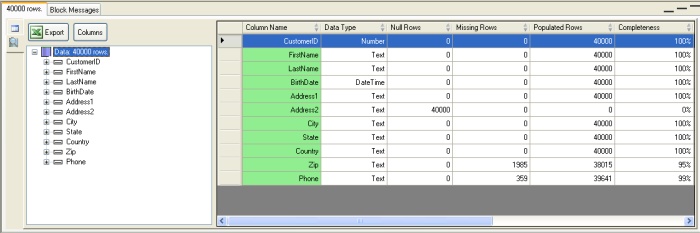

Depending on the size of the data set, you may be prompted to select which columns you wish to profile (for small sets all columns are simply profiled). For each column that is profiled, the following metrics are calculated:

- Column Name- the name of the column being profiled.

- Data Type - the declared data type for the column

- Null rows- the count of rows that contain the null value.

- Missing rows- for string types the count of rows containing the empty string.

- Populated rows- the count of rows that are not Null or missing.

- Completeness- the percentage of rows that are Populated (Populated/Total Rows).

- Cardinality- the number of unique values contained in the row.

- Uniqueness- the cardinality divided by the number of populated rows.

- Minimum- the minimum value within the column for numeric columns, the earliest date for date columns.

- Maximum- the maximum value within the column for numeric columns, the latest date for date columns.

- Average- the average all all values for numeric columns.

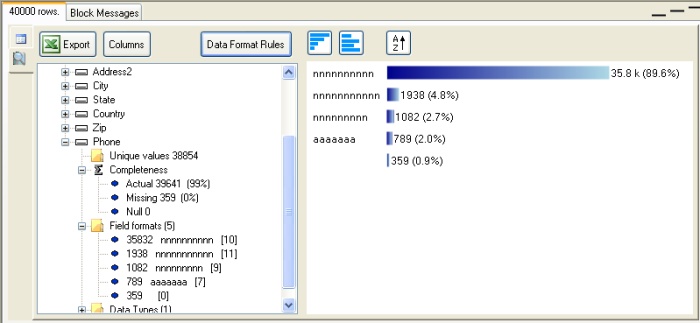

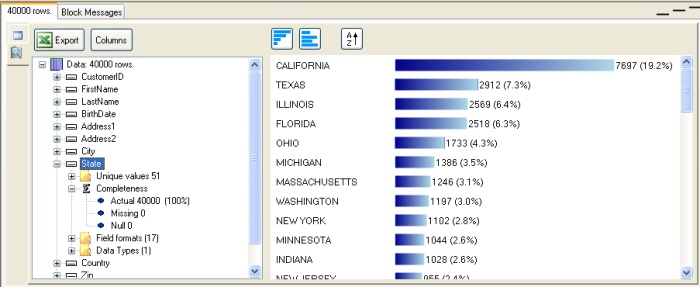

In the example below, a profile of a 40 thousand row customer table, we can see that while most columns are complete, Address2 is always null, and we are missing some ZIP codes and phone numbers:

By selecting the state column icon in the tree view on the left we can see the distribution of row counts by value for this column

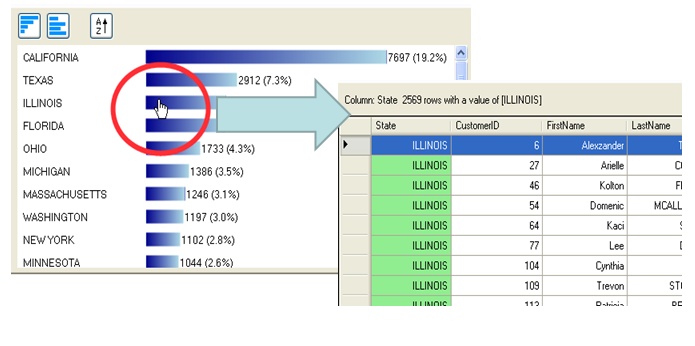

Drill Down

You can drill down into the rows by clicking on the bar in the column category count graphs.

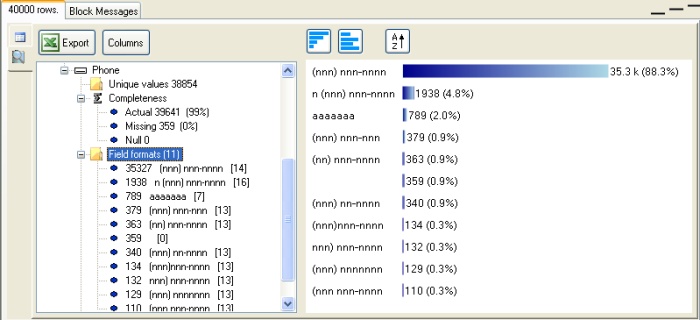

Field Formats

The data profiler also parses the values in each column using a format code- this allows you to at a glance understand many issues with poorly formed strings (such as Zip codes or Phone numbers)

In Datamartist Standard, the data format rules are fixed to a default, in Datamartist Pro, you have the additional ability to edit the rules.

The default rules are as follows:

- a- Denotes a letter of the alphabet

- n- Denotes a digit (0 to 9)

- s- Denotes white space (such as spaces)

- Punctuation is passed through to the format string so that you can see locations of brackets and dashes etc.

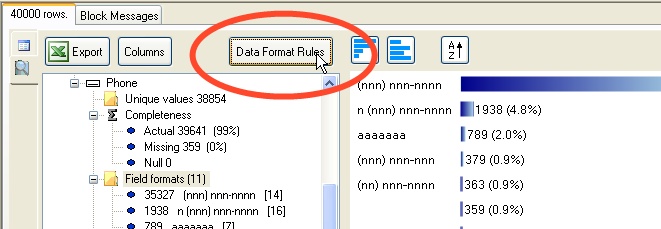

So if we drill down into the field format metric, we'll find row counts for each unique field format based on the above codes. A three digit number will appear as "nnn" for example, and a standard North American phone number with spaces would appear as (nnn) nnn-nnnn. We can see from this example, that we have some invalid phone numbers in our data:

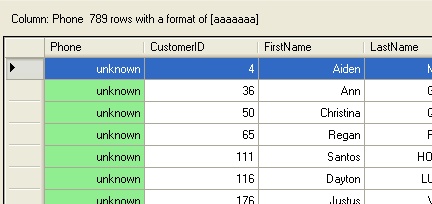

Again, you can drill down into the rows underlying each data format by clicking on the bar on the bar graph. If we click on the "aaaaaaa" we find that many rows have the word "unknown" as a value.

Datamartist PRO- field format rule editing

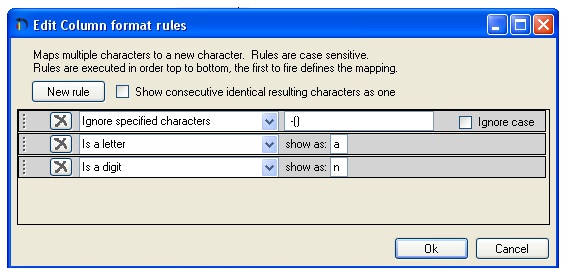

In the professional edition of Datamartist, an additional button is present that allows the default data format rules to be modified.

In this example, we could ignore brackets and dashes, and adjust the rules to be more specifically focused on the digits in the phone number. To do this, we add rules. The rules are tested starting from top to bottom, and the first rule to be fired determines the action taken for each character. In this case, we add a rule to ignore spaces, dashes and brackets:

Adding this rule results in a much smaller set of data formats;